Numerical analysis

From Wikipedia, the free encyclopedia

| This article includes a list of references, but its sources remain unclear because it has insufficient inline citations. (November 2013) (Learn how and when to remove this template message) |

Numerical analysis is the study of algorithms that use numerical approximation (as opposed to general symbolic manipulations) for the problems of mathematical analysis(as distinguished from discrete mathematics).

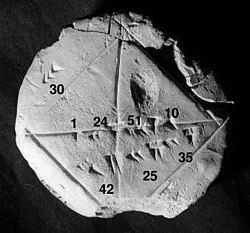

One of the earliest mathematical writings is a Babylonian tablet from the Yale Babylonian Collection (YBC 7289), which gives a sexagesimal numerical approximation of thesquare root of 2, the length of the diagonal in a unit square. Being able to compute the sides of a triangle (and hence, being able to compute square roots) is extremely important, for instance, in astronomy, carpentry and construction.[2]

Numerical analysis continues this long tradition of practical mathematical calculations. Much like the Babylonian approximation of the square root of 2, modern numerical analysis does not seek exact answers, because exact answers are often impossible to obtain in practice. Instead, much of numerical analysis is concerned with obtaining approximate solutions while maintaining reasonable bounds on errors.

Numerical analysis naturally finds applications in all fields of engineering and the physical sciences, but in the 21st century also the life sciences and even the arts have adopted elements of scientific computations. Ordinary differential equations appear in celestial mechanics (planets, stars and galaxies); numerical linear algebra is important for data analysis; stochastic differential equations and Markov chains are essential in simulating living cells for medicine and biology.

Before the advent of modern computers numerical methods often depended on hand interpolation in large printed tables. Since the mid 20th century, computers calculate the required functions instead. These same interpolation formulas nevertheless continue to be used as part of the software algorithms for solving differential equations.

Contents

[hide]General introduction[edit]

The overall goal of the field of numerical analysis is the design and analysis of techniques to give approximate but accurate solutions to hard problems, the variety of which is suggested by the following:

- Advanced numerical methods are essential in making numerical weather prediction feasible.

- Computing the trajectory of a spacecraft requires the accurate numerical solution of a system of ordinary differential equations.

- Car companies can improve the crash safety of their vehicles by using computer simulations of car crashes. Such simulations essentially consist of solving partial differential equations numerically.

- Hedge funds (private investment funds) use tools from all fields of numerical analysis to attempt to calculate the value of stocks and derivatives more precisely than other market participants.

- Airlines use sophisticated optimization algorithms to decide ticket prices, airplane and crew assignments and fuel needs. Historically, such algorithms were developed within the overlapping field of operations research.

- Insurance companies use numerical programs for actuarial analysis.

The rest of this section outlines several important themes of numerical analysis.

History[edit]

The field of numerical analysis predates the invention of modern computers by many centuries. Linear interpolation was already in use more than 2000 years ago. Many great mathematicians of the past were preoccupied by numerical analysis, as is obvious from the names of important algorithms like Newton's method, Lagrange interpolation polynomial, Gaussian elimination, or Euler's method.

To facilitate computations by hand, large books were produced with formulas and tables of data such as interpolation points and function coefficients. Using these tables, often calculated out to 16 decimal places or more for some functions, one could look up values to plug into the formulas given and achieve very good numerical estimates of some functions. The canonical work in the field is the NIST publication edited byAbramowitz and Stegun, a 1000-plus page book of a very large number of commonly used formulas and functions and their values at many points. The function values are no longer very useful when a computer is available, but the large listing of formulas can still be very handy.

The mechanical calculator was also developed as a tool for hand computation. These calculators evolved into electronic computers in the 1940s, and it was then found that these computers were also useful for administrative purposes. But the invention of the computer also influenced the field of numerical analysis, since now longer and more complicated calculations could be done.

Direct and iterative methods[edit]

Direct vs iterative methods

Consider the problem of solving

for the unknown quantity x.

For the iterative method, apply thebisection method to f(x) = 3x3 − 24. The initial values are a = 0, b = 3, f(a) = −24, f(b) = 57.

We conclude from this table that the solution is between 1.875 and 2.0625. The algorithm might return any number in that range with an error less than 0.2.

Discretization and numerical integration[edit]

In a two-hour race, we have measured the speed of the car at three instants and recorded them in the following table.

A discretization would be to say that the speed of the car was constant from 0:00 to 0:40, then from 0:40 to 1:20 and finally from 1:20 to 2:00. For instance, the total distance traveled in the first 40 minutes is approximately(2/3 h × 140 km/h) = 93.3 km. This would allow us to estimate the total distance traveled as 93.3 km +100 km + 120 km = 313.3 km, which is an example of numerical integration (see below) using aRiemann sum, because displacement is the integral of velocity.

Ill-conditioned problem: Take the function f(x) = 1/(x − 1). Note thatf(1.1) = 10 and f(1.001) = 1000: a change in x of less than 0.1 turns into a change in f(x) of nearly 1000. Evaluating f(x) near x = 1 is an ill-conditioned problem.

Well-conditioned problem: By contrast, evaluating the same function f(x) = 1/(x − 1) near x = 10 is a well-conditioned problem. For instance, f(10) = 1/9 ≈ 0.111 andf(11) = 0.1: a modest change in xleads to a modest change in f(x).

|

Direct methods compute the solution to a problem in a finite number of steps. These methods would give the precise answer if they were performed in infinite precision arithmetic. Examples include Gaussian elimination, the QR factorization method for solving systems of linear equations, and the simplex method of linear programming. In practice, finite precision is used and the result is an approximation of the true solution (assuming stability).

In contrast to direct methods, iterative methods are not expected to terminate in a finite number of steps. Starting from an initial guess, iterative methods form successive approximations that converge to the exact solution only in the limit. A convergence test, often involving the residual, is specified in order to decide when a sufficiently accurate solution has (hopefully) been found. Even using infinite precision arithmetic these methods would not reach the solution within a finite number of steps (in general). Examples include Newton's method, the bisection method, and Jacobi iteration. In computational matrix algebra, iterative methods are generally needed for large problems.

Iterative methods are more common than direct methods in numerical analysis. Some methods are direct in principle but are usually used as though they were not, e.g.GMRES and the conjugate gradient method. For these methods the number of steps needed to obtain the exact solution is so large that an approximation is accepted in the same manner as for an iterative method.

Discretization[edit]

Furthermore, continuous problems must sometimes be replaced by a discrete problem whose solution is known to approximate that of the continuous problem; this process is called discretization. For example, the solution of a differential equation is a function. This function must be represented by a finite amount of data, for instance by its value at a finite number of points at its domain, even though this domain is a continuum.

Generation and propagation of errors[edit]

The study of errors forms an important part of numerical analysis. There are several ways in which error can be introduced in the solution of the problem.

Round-off[edit]

Round-off errors arise because it is impossible to represent all real numbers exactly on a machine with finite memory (which is what all practical digital computers are).

Truncation and discretization error[edit]

Truncation errors are committed when an iterative method is terminated or a mathematical procedure is approximated, and the approximate solution differs from the exact solution. Similarly, discretization induces a discretization error because the solution of the discrete problem does not coincide with the solution of the continuous problem. For instance, in the iteration in the sidebar to compute the solution of , after 10 or so iterations, we conclude that the root is roughly 1.99 (for example). We therefore have a truncation error of 0.01.

Once an error is generated, it will generally propagate through the calculation. For instance, we have already noted that the operation + on a calculator (or a computer) is inexact. It follows that a calculation of the type is even more inexact.

What does it mean when we say that the truncation error is created when we approximate a mathematical procedure? We know that to integrate a function exactly requires one to find the sum of infinite trapezoids. But numerically one can find the sum of only finite trapezoids, and hence the approximation of the mathematical procedure. Similarly, to differentiate a function, the differential element approaches zero but numerically we can only choose a finite value of the differential element.

Numerical stability and well-posed problems[edit]

Numerical stability is an important notion in numerical analysis. An algorithm is called numerically stable if an error, whatever its cause, does not grow to be much larger during the calculation. This happens if the problem is well-conditioned, meaning that the solution changes by only a small amount if the problem data are changed by a small amount. To the contrary, if a problem is ill-conditioned, then any small error in the data will grow to be a large error.

Both the original problem and the algorithm used to solve that problem can be well-conditioned and/or ill-conditioned, and any combination is possible.

So an algorithm that solves a well-conditioned problem may be either numerically stable or numerically unstable. An art of numerical analysis is to find a stable algorithm for solving a well-posed mathematical problem. For instance, computing the square root of 2 (which is roughly 1.41421) is a well-posed problem. Many algorithms solve this problem by starting with an initial approximation x0 to , for instance x0 = 1.4, and then computing improved guesses x1, x2, etc. One such method is the famous Babylonian method, which is given by xk+1 = xk/2 + 1/xk. Another method, which we will call Method X, is given by xk+1 = (xk2 − 2)2 + xk.[3] We have calculated a few iterations of each scheme in table form below, with initial guesses x0 = 1.4 and x0 = 1.42.

| Babylonian | Babylonian | Method X | Method X |

|---|---|---|---|

| x0 = 1.4 | x0 = 1.42 | x0 = 1.4 | x0 = 1.42 |

| x1 = 1.4142857... | x1 = 1.41422535... | x1 = 1.4016 | x1 = 1.42026896 |

| x2 = 1.414213564... | x2 = 1.41421356242... | x2 = 1.4028614... | x2 = 1.42056... |

| ... | ... | ||

| x1000000 = 1.41421... | x27 = 7280.2284... |

Observe that the Babylonian method converges quickly regardless of the initial guess, whereas Method X converges extremely slowly with initial guess x0 = 1.4 and diverges for initial guess x0 = 1.42. Hence, the Babylonian method is numerically stable, while Method X is numerically unstable.

- Numerical stability is affected by the number of the significant digits the machine keeps on, if we use a machine that keeps only the four most significant decimal digits, a good example on loss of significance is given by these two equivalent functions

- If we compare the results of

- and

- by looking to the two results above, we realize that loss of significance (caused here by catastrophic cancelation) has a huge effect on the results, even though both functions are equivalent, as shown below

- The desired value, computed using infinite precision, is 11.174755...

- The example is a modification of one taken from Mathew; Numerical methods using Matlab, 3rd ed.

No comments:

Post a Comment